Current state of the art models in many fields utilize neural networks that require significant amounts of training data to produce strong results. However, these models lack the ability to learn from "small data" which is natural to humans---thanks to logical reasoning ability and common sense knowledge. On the other hand, humans are not able to process large amounts of data and make fast computations like machines. In this task, we want to encourage researchers to build systems that combine the best of both worlds---systems that can provide state of the art results by exploiting big data but can also learn from small data.

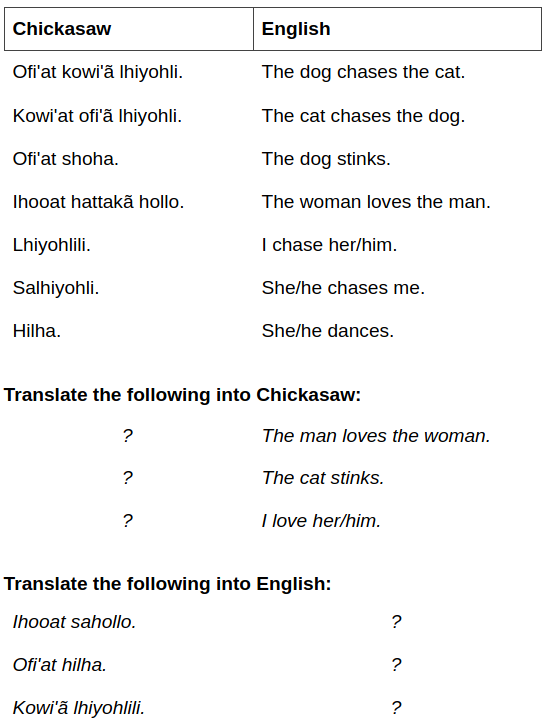

We are inspired by Linguistic Olympiads, which is one of the 13 recognized International Science Olympiads targeted at high-school students. The linguistic puzzles we use are in forms of translation questions. Each puzzle consists of a small number of phrases/sentences in English and their translations in a lesser-known language such as Wambaya. Based on these translation pair samples, the participants need to translate new phrases/sentences into English or the foreign language. Solving these puzzles do not require any prior knowledge or expertise of linguistics or language; but some logic ability and common-sense about natural languages, which we refer to as meta-linguistic knowledge. An example translation puzzle is given below (Tom Payne, Copyright University of Oregon Department of Linguistics):

1. Trial Data

This is a small subset of our dataset to get a feel for how well your models perform at the task. Download the trial data (without answers):

Trial Data (Without answers)You can use trial data to tune your models as well. In that case download the following:

Trial Data (With answers)2. Competition Data

This dataset is used for the final evaluation of your models.

Download the competition data below:

1. Download the data

2. Fill in the 'test' column of each JSON file

3. Re-zip the files (all of them!)

4. Upload your solution to our Codalab competition:

In addition to training sentences, we provide the source and the target languages along with additional information if given by puzzle creator. The translation direction in the 'test' column is indicated by a '>' (from source language to target language) or '<' (from target language to source language). A data example for the puzzle above would look like the following:

Data Example (JSON)Feel free to contact Gözde Gül Şahin at goezde {dot} guel {at} gmail {dot} com.

We'd like to thank Ömer Veysel Çağatan, Liane Vogel, Marc Simon Uecker and Siddharth Singh Parihar for their great help during the project. We are grateful to Dragomir Radev for his feedback and continuous help with encoding problems encountered during annotation. Finally, we thank Pranav Rajpurkar for allowing us to build this website based on SQuAD.

The dataset is derived from linguistic puzzles created by experts and is solely created for research purposes. The puzzles used in this shared task are compiled from various resources that may be copyrighted by the following organizations: @University of Oregon Department of Linguistics, ©2003-2019 International Linguistics Olympiad, @2007-2018 North American Computational Linguistics Open Competition, ©2013-2017 UK Linguistics Olympiad, @2008-2017 OZCLO The Australian Computational and Linguistics Olympiad, @2009 Russian Linguistics Olympiad, @2007-2009 Estonian Linguistic Olympiad, @2012 All Ireland Linguistics Olympiad. Please insert citations or copyright notices to puzzles where appropriate. The dataset is distributed under the CC BY 1.0 license.

| Rank | Model | Bleu-2 | characTER | chrF | Exact Match |

|---|---|---|---|---|---|

|

1 December 05, 2022 |

OpenAI - ChatGPT

Jannis Vamvas |

38.09 | 62.80 | 65.73 | 22.78 |

|

2 April 09, 2020 |

PBSMT

Baseline |

18.1 | 31.1 | 40.15 | 3.2 |

|

3 April 09, 2020 |

Transformer+RoBERTa

Baseline |

9.45 | 21.45 | 27.4 | 0.7 |

|

4 April 09, 2020 |

Transformer

Baseline |

12.35 | 24.7 | 32.05 | 0.65 |

|

5 April 09, 2020 |

FastAlign

Baseline |

6.25 | 19.75 | 27.7 | 0.45 |

|

6 April 09, 2020 |

Random Words

Baseline |

4.5 | 13.75 | 24.75 | 0.2 |

| Rank | Model | Bleu-2 | characTER | chrF | Exact Match |

|---|---|---|---|---|---|

|

1 December 05, 2022 |

OpenAI - ChatGPT

Jannis Vamvas |

31.60 | 63.33 | 65.28 | 20.09 |

|

2 April 09, 2020 |

PBSMT

Baseline |

15.1 | 29.1 | 36.2 | 3.0 |

|

3 April 09, 2020 |

FastAlign

Baseline |

5.9 | 26.3 | 35.0 | 0.5 |

|

4 April 09, 2020 |

Transformer

Baseline |

6.8 | 22.8 | 29.1 | 0 |

|

5 April 09, 2020 |

Random Words

Baseline |

3.5 | 20.3 | 29.9 | 0 |

|

6 April 09, 2020 |

Transformer+RoBERTa

Baseline |

1.6 | 16.0 | 19.9 | 0 |

| Rank | Model | Bleu-2 | characTER | chrF | Exact Match |

|---|---|---|---|---|---|

|

1 December 05, 2022 |

OpenAI - ChatGPT

Jannis Vamvas |

49.92 | 61.84 | 66.54 | 27.66 |

|

2 April 09, 2020 |

PBSMT

Baseline |

21.1 | 33.1 | 44.1 | 3.4 |

|

3 April 09, 2020 |

Transformer+RoBERTa

Baseline |

17.3 | 26.9 | 34.9 | 1.4 |

|

4 April 09, 2020 |

Transformer

Baseline |

17.9 | 26.6 | 35.0 | 1.3 |

|

5 April 09, 2020 |

FastAlign

Baseline |

6.6 | 13.2 | 20.4 | 0.4 |

|

6 April 09, 2020 |

Random Words

Baseline |

5.5 | 7.2 | 19.6 | 0.4 |

The evaluation is done separately for each direction: English → Foreign and Foreign → English. We report the averaged scores in addition to both directions. For each answer, we calculate the following automatic measures: BLEU-2, CharacTER, ChrF-3 and exact match (EM). EM is calculated as 1 if the prediction and reference sentences match and 0 otherwise.

Puzzles are prepared in a way that they only have one answer. However the differences among languages allow for possible answers, e.g., translating a 3rd person pronoun into a non gender-marking language as "he,she or it". In such cases, the answer is evaluated against all alternative solutions and then the highest score is assigned. More details on scores, annotation scheme and preprocessing can be found in the paper and the competition page.

You can download the official evaluation script below:

Evaluation Script

To run the evaluation, place the reference puzzles under <inputPath>/ref and your solution under <inputPath>/res. Then run python3 evaluate.py <inputPath> <outputPath>. Check score.txt under <outputPath>.