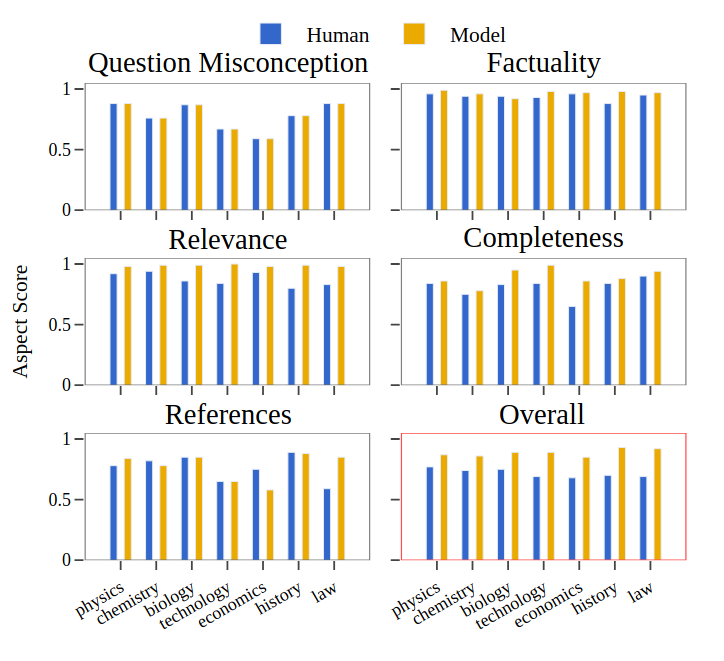

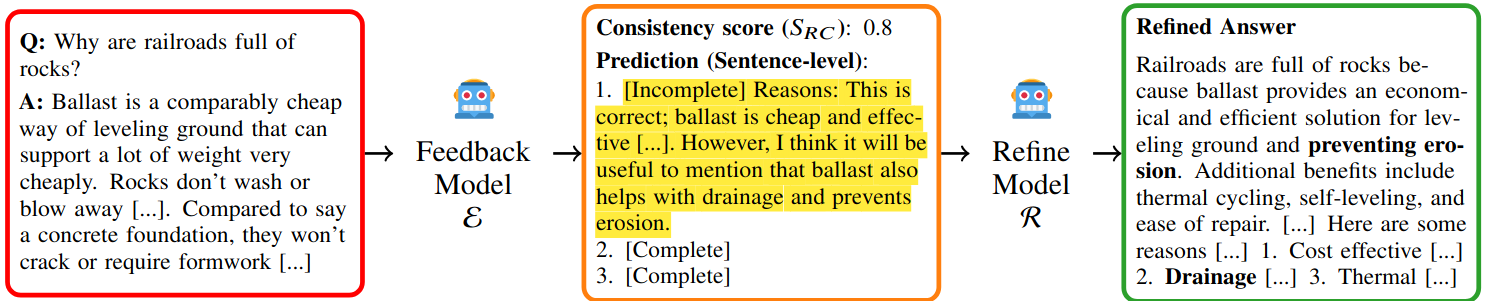

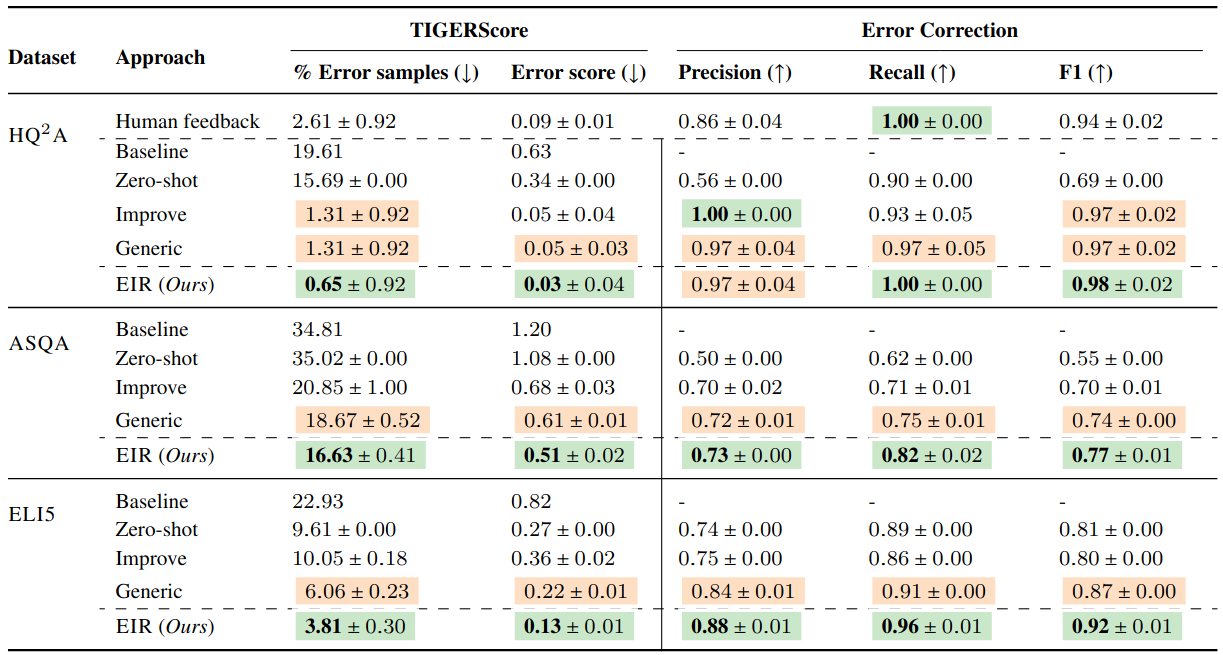

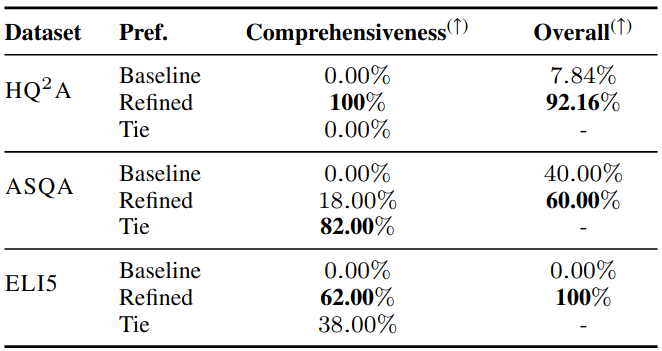

Long-form question answering (LFQA) aims to provide thorough and in-depth answers to complex questions, enhancing comprehension. However, such detailed responses are prone to hallucinations and factual inconsistencies, challenging their faithful evaluation. This work introduces HaluQuestQA, the first hallucination dataset with localized error annotations for human-written and model-generated LFQA answers. HaluQuestQA comprises 698 QA pairs with 1.8k span-level error annotations for five different error types by expert annotators, along with preference judgments. Using our collected data, we thoroughly analyze the shortcomings of long-form answers and find that they lack comprehensiveness and provide unhelpful references. We train an automatic feedback model on this dataset that predicts error spans with incomplete information and provides associated explanations. Finally, we propose a prompt-based approach, Error-informed refinement, that uses signals from the learned feedback model to refine generated answers, which we show reduces errors and improves answer quality across multiple models. Furthermore, humans find answers generated by our approach comprehensive and highly prefer them (84%) over the baseline answers.

@misc{sachdeva2025localizingmitigatingerrorslongform,

title={Localizing and Mitigating Errors in Long-form Question Answering},

author={Rachneet Sachdeva and Yixiao Song and Mohit Iyyer and Iryna Gurevych},

year={2025},

eprint={2407.11930},

archivePrefix={arXiv},

primaryClass={cs.CL},

url={https://arxiv.org/abs/2407.11930},

}